Automated Data Distribution: Delete Old Data while Loading New Data to a Dataset

You can now delete data from a dataset while loading new data using Automated Data Distribution (ADD). This keeps the data in your workspace consistent and avoids situations where new data is already in the dataset but the old data has not yet been removed.

The primary use case for deleting data using ADD is to delete data during incremental load.

How can you start using the data deletion functionality in ADD?

Add the 'x__deleted' column to the data load table. The 'x__deleted' column indicates whether a specific row should be deleted from the dataset when running data load. You can choose to delete data by attribute values (attribute values in the data load table must match those in the workspaces from which you want to delete data), Connection Point, or Fact Table Grain.
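To illustrate the idea, the following is a minimal Python sketch of how rows flagged in 'x__deleted' could be applied during an incremental load. It is a simulation only, not how ADD is implemented: the function name, the 'id' matching column, and the assumption that 'x__deleted' holds a boolean value are all hypothetical.

```python
# Illustrative simulation only; this is NOT GoodData's implementation.
# Assumptions: 'x__deleted' holds a boolean, and rows are matched on a
# single hypothetical key column named 'id' (think of a Connection Point).

def apply_incremental_load(dataset, load_rows, key="id"):
    """Return a new dataset after applying the rows of a data load table.

    dataset   -- dict mapping key value -> row (the data already loaded)
    load_rows -- iterable of dicts, each carrying an 'x__deleted' flag
    key       -- name of the column used to match rows
    """
    result = dict(dataset)  # leave the caller's dataset untouched
    for row in load_rows:
        data = {k: v for k, v in row.items() if k != "x__deleted"}
        if row.get("x__deleted"):
            result.pop(data[key], None)  # delete the matching row, if any
        else:
            result[data[key]] = data     # insert or update the row
    return result


existing = {1: {"id": 1, "amount": 100}, 2: {"id": 2, "amount": 250}}
load = [
    {"id": 2, "amount": 250, "x__deleted": True},   # remove old row 2
    {"id": 3, "amount": 75, "x__deleted": False},   # load new row 3
]
updated = apply_incremental_load(existing, load)
```

In this sketch, the deletion and the new rows are applied in the same pass, which mirrors the goal of never exposing new data alongside old data that should already be gone.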

Coming soon: deleting data by delete side table

Currently, you can delete data only by the data load table. We are working on adding the option for deleting data by the delete side table. Deleting by the delete side table gives you more flexibility in defining criteria by which you can delete data (by any combination of attributes). Stay tuned!

Learn more:

Delete Data from Datasets in Automated Data Distribution

Control Dashboard Filters via API Events and Methods

You can now control attribute filters on an embedded dashboard or a dashboard with embedded content using a new API event, 'Dashboard filters changed', and a new API method, 'Apply attribute filters to a dashboard'.

How are they used?

- Apply attribute filters to a dashboard: Using this method, the parent application (or the embedded content) can send attribute filters and their values to be applied to the dashboard.

- Dashboard filters changed: This event is triggered when filters on the embedded dashboard (or the dashboard with embedded content) have changed.

Learn more:

Embedded Dashboard and Report API - Events and Methods (look for 'Apply attribute filters to a dashboard' and 'Dashboard filters changed')

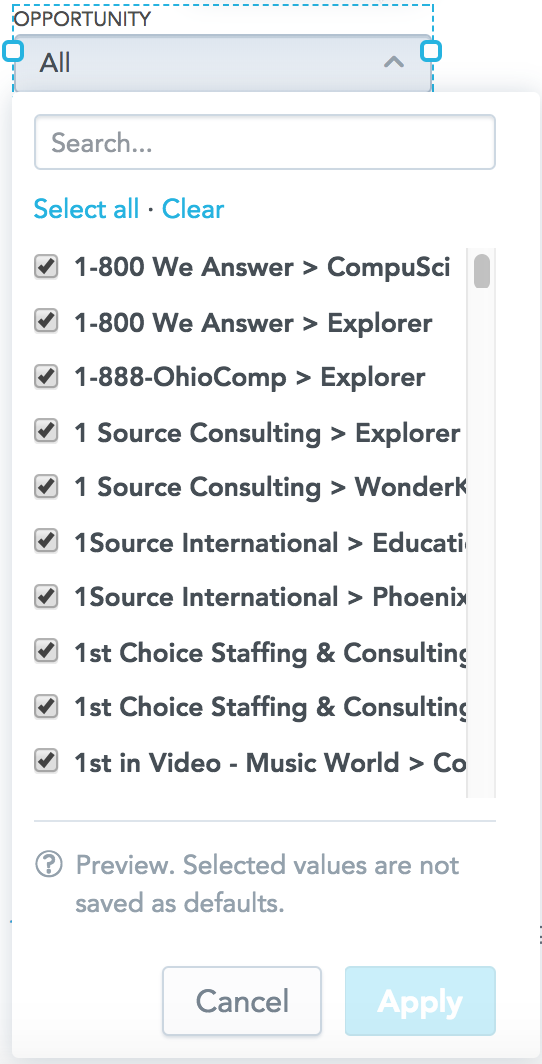

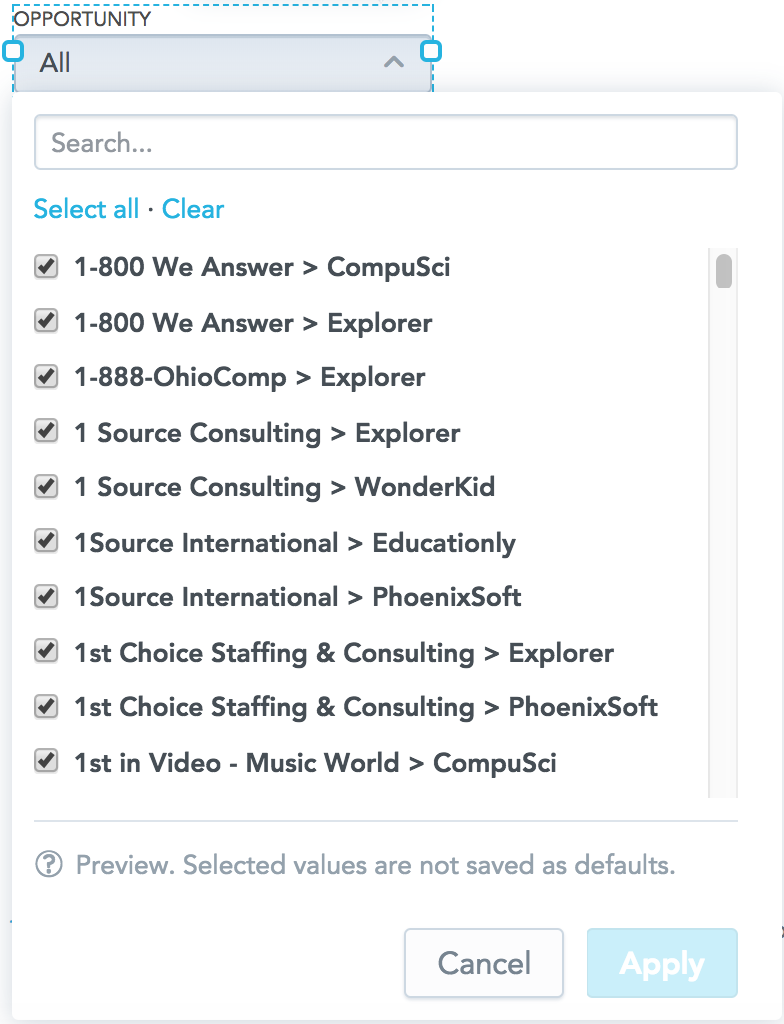

Resizable Drop-down Dialog of Attribute Filters

When you add an attribute filter to your dashboard, you can now resize the filter drop-down dialog to better fit the filter values. The width that you configure becomes the default for all dashboard viewers.

To resize the dialog, manually drag the dialog border.

The default width of the drop-down dialog is 240 px. You can widen the dialog up to 400 px.

Resizing the drop-down dialog helps you make all attribute value names visible when the names are long, and especially when multiple names share a similar beginning (for example, International Financial Company - Account 1, International Financial Company - Account 2, ..., International Financial Company - Account x).

This is how you can resize the drop-down dialog (the dashboard is in edit mode):

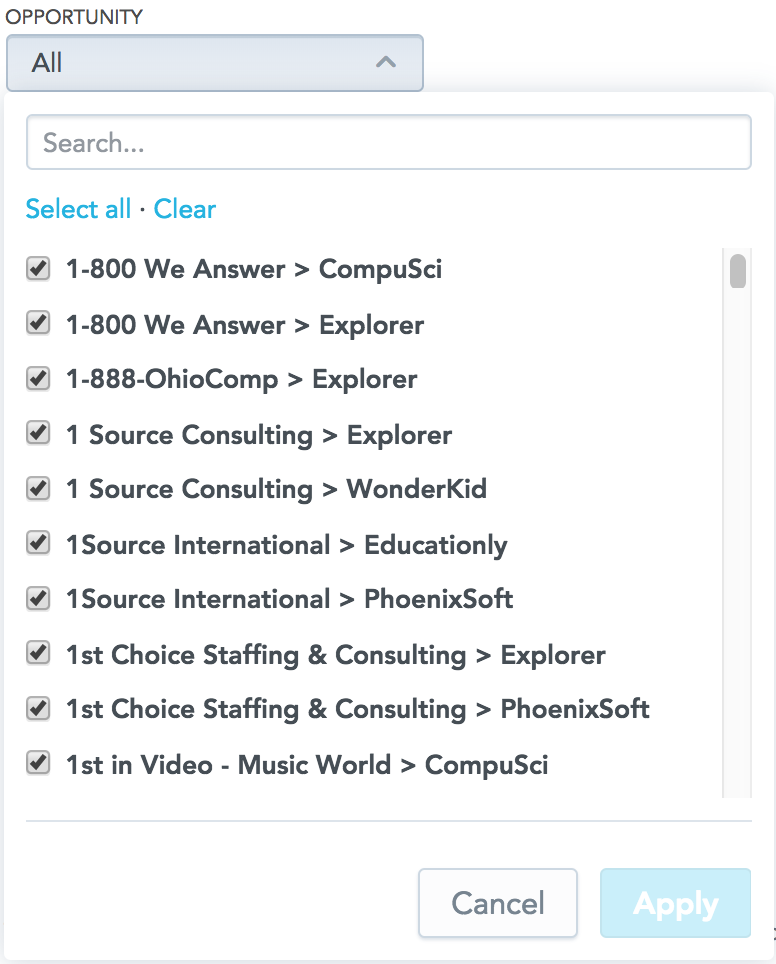

This is how dashboard viewers see the attribute filter (the dashboard is in view mode):

Learn more:

Filter for Attributes

Data Warehouse JDBC Driver 3.0.2 Available!

We have released version 3.0.2 of the Data Warehouse JDBC driver. Download it from the Downloads page at https://secure.gooddata.com/downloads.html.

NOTE: If you are a white-labeled customer, log in to the Downloads page from your white-labeled domain:

https://my.domain.com/downloads.html

What's new in this release?

- The Data Warehouse JDBC driver now runs on Java 8.

NOTE: Java 7 is no longer supported. Make sure that you have Java 8 installed.

- The logging mechanism has been enhanced.

- The overall performance has been improved.

What is the enhanced logging mechanism good for?

The enhanced logging can help you troubleshoot issues, examine performance, or audit the driver usage.

To enable the logging, choose one of the following methods:

- Add the JDBC driver logging property, and set it to the path where the log will be written:

log.path=/path/to/write/log

Example: log.path=/tmp/jdbc.log

- Add the log path to the connection string as a parameter:

jdbc:gdc:datawarehouse://hostname:port/gdc/datawarehouse/instances/dw_instance_id?log.path=/path/to/write/log

Example: jdbc:gdc:datawarehouse://secure.gooddata.com/gdc/datawarehouse/instances/abc123def456?log.path=/tmp/jdbc.log
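If you assemble the connection string in a script, a small helper can keep the log.path parameter consistent. The following Python sketch only builds the string in the format shown above; the function name and its arguments are hypothetical and are not part of the JDBC driver.

```python
# Hypothetical helper: builds a connection string in the format shown above.
# It does not open a connection and is not part of the JDBC driver.

def build_jdbc_url(host, instance_id, log_path=None, port=None):
    """Return a Data Warehouse JDBC connection string.

    host        -- hostname, for example "secure.gooddata.com"
    instance_id -- ID of the Data Warehouse instance
    log_path    -- optional path where the driver log will be written
    port        -- optional port, appended to the host as "host:port"
    """
    authority = f"{host}:{port}" if port is not None else host
    url = f"jdbc:gdc:datawarehouse://{authority}/gdc/datawarehouse/instances/{instance_id}"
    if log_path:
        # The log path is passed as a connection string parameter.
        url += f"?log.path={log_path}"
    return url


url = build_jdbc_url("secure.gooddata.com", "abc123def456", log_path="/tmp/jdbc.log")
```

Leaving log_path unset returns the connection string without the logging parameter.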

Learn more:

Download the JDBC Driver

Enable Logging for the JDBC Driver

Variables: Limit for Number of Users Removed

We have removed the limit of 500 users to whom you can assign a variable. You can now assign a variable to as many users as you need.

Learn more:

Define Filtered Variables

Define Numerical Variables

New API - PGP SSO POST

We have implemented the POST method in PGP SSO. POST is now the recommended method to authenticate. The GET method will be deprecated on the GoodData platform beginning July 2018.

Learn more:

PGP Single Sign On

API

REMINDER: Add Mandatory User-Agent Header to API Request

Starting from March 31, 2018, the GoodData REST API will require every API request to contain the User-Agent header. Any API request without the User-Agent header made after March 31, 2018, will be rejected.

The User-Agent header must be in the following format: product/version (your_email@example.com)

where:

-- product is your product name; can include your company name; must not contain spaces or slashes ( / )

-- version is your product version; must not contain spaces or slashes ( / )

-- your_email@example.com is the email of the contact person responsible for the product in your company. The email is optional if you have specified your company name in product.

Examples: Insights-Provisioner/1.0 (gd_integration_person@acme.com)

Acme-Company-GDIntegration/0.9
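As a way to check your own header values before sending requests, here is a minimal Python sketch that builds and validates a User-Agent string against the rules above. The function names and the regular expression are our own illustration, not an official GoodData validator, and the email check is deliberately loose.

```python
import re

# Illustrative check of the format rules above; not an official validator.
_TOKEN = r"[^\s/]+"  # product and version: no spaces, no slashes
_USER_AGENT_RE = re.compile(
    rf"(?P<product>{_TOKEN})/(?P<version>{_TOKEN})"
    r"(?: \((?P<email>[^\s()]+@[^\s()]+)\))?"  # optional " (email)" part
)


def is_valid_user_agent(value):
    """Return True if value looks like 'product/version (email)'."""
    return _USER_AGENT_RE.fullmatch(value) is not None


def build_user_agent(product, version, email=None):
    """Build a User-Agent value, rejecting spaces and slashes."""
    for name, part in (("product", product), ("version", version)):
        if not part or re.search(r"[\s/]", part):
            raise ValueError(f"{name} must not be empty or contain spaces or slashes")
    user_agent = f"{product}/{version}"
    if email:
        user_agent += f" ({email})"
    return user_agent


headers = {
    "User-Agent": build_user_agent(
        "Insights-Provisioner", "1.0", "gd_integration_person@acme.com"
    )
}
```

The resulting headers dictionary can be passed to whichever HTTP client you use for your API calls.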

Action needed:

If you use a tool for handling API calls or a custom library for integrating with the GoodData REST API, update them to include the User-Agent header in every API request.

Although the API change is planned for the next year, we strongly recommend that you do so as soon as possible.

If you have any questions, please contact GoodData Support.

Upcoming API Updates

The following API updates will be implemented:

There will be a backward-incompatible change to the /gdc/md/<pid>/userfilters API, which is used to assign data permissions to users.

This command can ONLY be used by the owner of the domain that the <login> belongs to. After the release, you may receive "Unknown user or user URI is wrong." when you use <login>. If this happens, use <user-id> instead.

A separate notice will be posted in these release notes when the change occurs.